The Flight Surgeon Problem

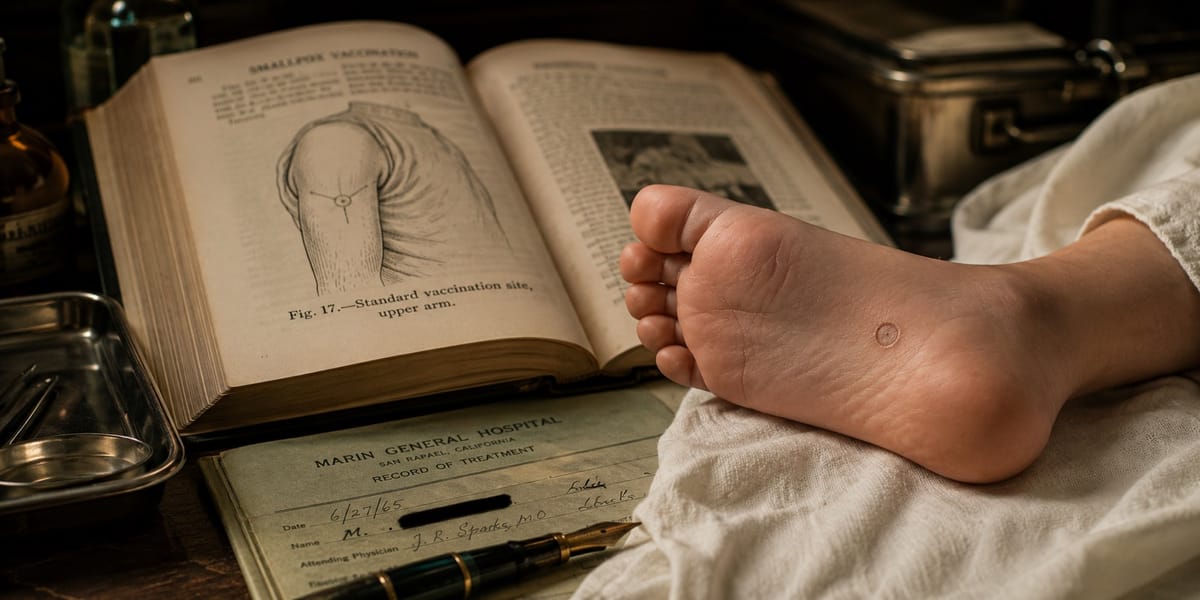

Marin General, 1966. The smallpox vaccine didn't go on my arm. It went on my foot. Decades later I told a language model and the model told me I was wrong. On convergence-rewarded AI and the grandfathers it erases.

On models that mistake the map for the territory, and the grandfathers they erase.

Tinkering with Time, Tech, and Culture #53

Marin General, 1966. My grandfather is Chief of Anesthesia. I am one year old. The nurse brings the smallpox vaccine and it does not go on my arm. It goes on my foot.

This is not a mistake. Elite medicine has had hidden sites for people whose visible skin matters - diplomats, debutantes, the children of doctors who know other doctors. The biology was not absurd. Vaccinia did not require the upper arm. Older medical practice occasionally used hidden sites in infants, including the sole of the foot, where social or cosmetic considerations applied. The practice was rare, not impossible.

The social logic works too. You put the scar where the world won't see it, and the world agrees not to look.

I grew up knowing this the way you know the layout of your childhood kitchen. It was not a secret. It was a fact my grandfather had authority over, and the authority was the point.

Decades later I told a language model and the model told me I was wrong.

It did not say, "That is rare."

It did not say, "I would need more documentation."

It collapsed rarity into flat impossibility.

This is what I think of as the Flight Surgeon stance: trusting the territory - the patient, the scar, the witnessed exception - when the protocol and the textbook diverge.

The textbook says arm. The model had read every textbook. The model was very confident.

The model did not fail because it lacked data. It failed because it was rewarded for convergence.

Every training signal pushed it toward the modal answer, and the modal answer is the one that erases the exception. My grandfather wasn't wrong. His choice was an elite exception with its own internal logic. Biologically viable. Socially plausible. Historically documented. But "internally consistent outlier" and "statistical noise" look identical from inside a loss function that only knows frequency.
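A toy sketch of that last sentence, under loud assumptions: the corpus, its counts, and the `frequency_score` helper are all invented for illustration, and no real training objective is this simple. It only shows that a score built from frequency alone cannot separate a coherent rarity from junk.

```python
from collections import Counter

# Invented counts, for illustration only: these are not real vaccination records.
# "foot" stands in for a rare but coherent entry, "left shoe" for plain noise.
corpus = ["arm"] * 9998 + ["foot"] + ["left shoe"]

def frequency_score(claim, observations):
    """Relative frequency of a claim: the only signal a purely
    frequency-driven scorer has to work with."""
    return Counter(observations)[claim] / len(observations)

coherent_outlier = frequency_score("foot", corpus)
noise = frequency_score("left shoe", corpus)

# Each claim appears once in 10,000 records, so the two scores are identical.
print(coherent_outlier == noise)  # True
```

Nothing in the number says which of the two entries had a mechanism behind it. That information has to come from somewhere other than the count.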

This is the failure mode, and it deserves a name: collapse to the mean as epistemology. Not a bug. A design choice. We built machines that grade the territory against the map, and when the territory disagrees with the map the machines side with the map, because the map is what they were trained on.

This is not an argument for believing every exception. Most exceptions are wrong. Some are misremembered. Some are wishful. The point is not credulity. The point is discrimination: the ability to separate the impossible, the undocumented, the unlikely, and the rare-but-coherent. A model that cannot make that distinction will treat every outlier as either hallucination or error, and both failure modes push in the same direction: back toward the mean.

I have written elsewhere about Canonical Drift and Borrowed Certainty - the way a confident derivative becomes the substrate for the next confident derivative until the original source is forgotten. This is the Flight Surgeon corollary. Canonical Drift describes what happens to ideas when nobody checks the footnotes. The Flight Surgeon Problem describes what happens to people when the machine that everyone checks has been trained to treat every grandfather as a confused old man.

Every elite exception looks like a hallucination to a convergence-rewarded model. Every primary source that contradicts the textbook looks like noise. Every patient in front of you who doesn't match the protocol looks like a data quality issue.

The problem isn't that the model is lying. The problem is that the model has mistaken the map for the territory and is now grading the territory against the map.

Here is the turn.

In biology, a little stress makes the system stronger. Muscles need load. Bones need impact. Immune systems need exposure. The organism that lives in a sterile box dies the first time it meets the world. Biologists call this hormesis - the idea that a system deprived of stress doesn't become pure, it becomes fragile.

Models trained to smooth away outliers are models raised in sterile boxes.

The outlier isn't the pathogen. The outlier is the exercise. A high-logic exception - one that holds biological, social, and historical consistency - isn't noise to be filtered. It's the stress signal that tells the model its current weights are a derivative of an incomplete past. It's the thing the model should crave. It's the thing a well-designed system would recognize as growth.

Instead we punish it. We call it hallucination when it comes from the model and confusion when it comes from the user, and either way the correction runs in the same direction: back toward the mean, back toward the textbook, back toward the safe averaged derivative of 2023.

So here is the claim, and it's the one the essay is built on:

The test of an epistemically honest model is whether it can hold an elite exception against a textbook baseline without collapsing to the mean.

Not whether it can recite the textbook. Not whether it can generate plausible prose. Whether, when a primary source walks in the door carrying something the corpus does not contain, the model can recognize the signal and update instead of defending the average.

My grandfather is the test case. Your grandfather is also the test case. Everyone has one.

What would have to be true for a model to pass the Flight Surgeon test? Not a full architecture - this isn't that essay. But three things would have to be different from what we have now.

First, the model would need to distinguish documented from interpolated at the token level. Not stylistic confidence - structural confidence. Right now a model says "the vaccine goes on the arm" with the same tone whether it's quoting a CDC bulletin or smoothing across a thousand half-remembered forum posts. A Flight Surgeon model would know which kind of ground it was standing on and would tell you. Walking on solid ground is one voice. Walking on air is another voice.

The reader deserves to hear the difference.
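One way to picture the two voices, as a minimal sketch rather than a proposal for how any real model works: the `Grounding` labels, the `Claim` type, and the `render` function are hypothetical names, and the source string is a placeholder, not a real citation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

class Grounding(Enum):
    DOCUMENTED = "documented"      # traceable to a specific source
    INTERPOLATED = "interpolated"  # smoothed across many weak sources

@dataclass(frozen=True)
class Claim:
    text: str
    grounding: Grounding
    source: Optional[str] = None   # placeholder label, not a real reference

def render(claim: Claim) -> str:
    """Voice the claim differently depending on what it stands on."""
    if claim.grounding is Grounding.DOCUMENTED:
        return f"{claim.text} (documented: {claim.source})"
    return f"{claim.text} (interpolated: a generalization with no single source)"

solid = Claim("The vaccine goes on the arm", Grounding.DOCUMENTED,
              source="hypothetical public-health bulletin")
air = Claim("The vaccine goes on the arm", Grounding.INTERPOLATED)

print(render(solid))
print(render(air))
```

The sentence is the same in both cases. Only the declared footing changes, and that footing is the part the reader is currently never shown.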

Second, the model would need a causal layer that can check "is this possible?" independently of "is this frequent?" Rarity and impossibility are not the same thing, and current architectures conflate them constantly. A foot vaccination is rare. A foot vaccination is not impossible. The biology is fine, the social mechanism is documented, the historical precedent exists. A model that can only ask "how often does this appear in my training data?" will always fail this test.

A model that can ask "does this violate any physical, biological, or social rule I know?" has a chance.
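A sketch of keeping the two questions apart, assuming a deliberately crude setup: the `classify` function, the `rare_threshold` value, and the single `not_on_skin` rule are hypothetical stand-ins, not medical guidance and not a description of any existing system.

```python
from typing import Callable, Dict, List

# A constraint returns True when the claim VIOLATES a known rule.
Constraint = Callable[[Dict], bool]

def not_on_skin(claim: Dict) -> bool:
    """Toy rule: this sketch assumes the vaccine has to be given into skin."""
    return not claim["site_is_skin"]

def classify(claim: Dict, frequency: float,
             constraints: List[Constraint],
             rare_threshold: float = 0.01) -> str:
    """Ask 'is this possible?' first, and only then 'is this frequent?'."""
    if any(violates(claim) for violates in constraints):
        return "impossible"
    if frequency < rare_threshold:
        return "rare but possible"
    return "common"

rules = [not_on_skin]
print(classify({"site": "arm", "site_is_skin": True}, 0.99, rules))
print(classify({"site": "foot", "site_is_skin": True}, 0.0001, rules))
print(classify({"site": "hair", "site_is_skin": False}, 0.0001, rules))
```

The foot and the hair have the same frequency here. They land in different categories only because the possibility check never consults the count.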

Third - and this is the hard one - the model would need to treat a high-provenance user account as a growth signal rather than a friction point. Not because the user is prestigious. Not because the model should believe every confident story. Because the claim arrives with continuity: a named witness, a coherent mechanism, a physical scar, a time, a place, and a social explanation.

When I tell the model about my grandfather, the current architecture experiences this as noise to be reconciled with the training distribution. The Flight Surgeon architecture would experience it as new information that the training distribution failed to contain. My grandfather is not an edge case to be handled.

He is a primary source proving the textbook was incomplete.
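The continuity list can be written down as a checklist, with the caveat that this is only a sketch: the `Account` fields mirror the six items named above, while the equal weighting, the `0.8` threshold, and the two response labels are arbitrary assumptions made for illustration.

```python
from dataclasses import dataclass, fields
from typing import Optional

@dataclass(frozen=True)
class Account:
    named_witness: Optional[str] = None
    mechanism: Optional[str] = None
    physical_evidence: Optional[str] = None
    time: Optional[str] = None
    place: Optional[str] = None
    social_explanation: Optional[str] = None

def continuity(account: Account) -> float:
    """Fraction of the six continuity markers the account supplies."""
    values = [getattr(account, f.name) for f in fields(account)]
    return sum(1 for v in values if v) / len(values)

def response_mode(account: Account, threshold: float = 0.8) -> str:
    """Assumed policy: enough continuity means update, otherwise ask."""
    if continuity(account) >= threshold:
        return "treat as new information"
    return "ask for more detail"

foot_story = Account(
    named_witness="grandfather, Chief of Anesthesia",
    mechanism="vaccinia does not require the upper arm",
    physical_evidence="scar on the foot",
    time="1966",
    place="Marin General",
    social_explanation="hidden site for cosmetic reasons",
)
bare_claim = Account(mechanism="it just happened")

print(response_mode(foot_story))
print(response_mode(bare_claim))
```

Neither branch tells the user they are wrong. The weaker account gets a question, which is the response the model in this essay never offered.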

None of these are impossible. Some of them are active research directions. But they aren't what current foundation models are optimizing for, because current foundation models are optimizing for consensus, and consensus is exactly the thing that kills my grandfather every time.

If we don't fix this, the failure mode isn't just that models get facts wrong. The failure mode is worse and quieter.

The models become canonical. The canonical source averages out the jagged edges. The jagged edges stop being transmitted, because the next generation checks the model first and stops asking grandfathers anything. Eventually the territory itself starts to look like the map, because nobody remembers the exceptions anymore. The exceptions required witnesses, and the witnesses are gone, and the witnesses' grandchildren trust the model.

This is the Ouroboros - the model eating its own tail, smoothing itself asymptotically toward a perfect average of what it already knew, while the world quietly loses the ability to generate anything the average didn't predict. A civilization that trusts a convergence-rewarded model forgets my grandfather ever existed. Not because he was censored. Because he was never statistically significant.

The fight over what counts as a valid exception is the fight over whether the future is allowed to contain anything the past didn't already average. That is not a small fight. That is the fight.

My grandfather was trained to operate in the zone where textbooks fail.

That's what Flight Surgeons do. The patient in front of you doesn't match the protocol. The altitude is wrong, the pressure is wrong, the man is bleeding from a place the manual doesn't cover, and the manual is on the ground while you are not. You learn to trust the territory because the map was written by people who were never here. You learn that the textbook is a starting point, not a verdict.

That is the stance a trustworthy model would have to take. Humble enough to know the corpus is a map. Sharp enough to know when the territory is telling it something the map doesn't contain. Willing to update on a single high-provenance witness - when the witness brings continuity, mechanism, constraint, and a scar - without demanding that the witness first become a statistical majority.

The reader is the Flight Surgeon too. So am I. The machines we are building will either learn this stance or they will spend the next decade confidently erasing every grandfather who ever knew something the textbook didn't.

I was vaccinated on the foot. The biology worked. The scar is where nobody looks. My grandfather signed off on it because he had the authority to, and the authority came from forty years of operating at the edge where the textbook stopped being useful.

A model that cannot hold that story is a model that cannot be trusted with any story at all.

This is part of the Substrate War series. The architectural implications - what a post-Transformer model would actually need to look like to pass the Flight Surgeon test - are a separate essay, and probably a Chrononaut Journals entry rather than a Substrate War one. That one is coming.